Scams have changed a lot in recent years. Earlier, they were fairly easy to spot because of poorly written messages, random calls, or obvious tricks.

But today, the game has shifted completely. With the rapid rise of artificial intelligence, scammers have become far more advanced.

They can now create incredibly realistic voices, videos, and messages that are very difficult to question at first glance.

In India, there have already been several cases where people lost lakhs of rupees simply because they trusted what they saw or heard on their phones and screens.

Understanding how these high-tech scams work is the first and most important step to staying safe in a digital world.

What are AI Scams?

AI scams are a type of fraud where criminals use artificial intelligence tools to make their tricks look believable.

Instead of manually writing generic messages or making cold calls, they use AI to generate content that sounds natural and convincing.

This includes everything from cloned voices and deepfake videos to messages tailored to each victim.

The biggest problem with AI scams is that they remove the usual warning signs we look for.

Because the communication feels so genuine, it is much easier for people to lower their guard and respond to a request.

Types of AI Scams

AI scams can take many different forms depending on how the technology is being used. Here are some of the most common ones we are seeing today.

-

Deepfake Video Scams

In this type, scammers use AI to create fake videos of real people. These might be famous business leaders, celebrities, or well-known public personalities.

These videos are often used to promote get-rich-quick investment schemes. Since the person in the video looks and moves like the real person, many assume the offer is genuine.

This is dangerous because we tend to trust visual proof more than text.

-

AI Voice Cloning Scams

Scammers can now copy a person’s voice using just a few seconds of recorded audio. They use this cloned voice to call family members or colleagues and ask for urgent money transfers.

The voice sounds exactly like someone you know and love. This makes it very difficult to doubt the request in the heat of the moment.

-

AI Investment Scams

These scams combine AI-generated content with fake trading platforms.

Scammers blast out messages that claim a new AI-based system can generate massive returns with no risk.

They often show fake profit dashboards to build your confidence. When you try to withdraw your money, they demand payment of taxes or fees first, or simply block you.

-

AI Phishing Scams

Traditional phishing emails used to have obvious spelling mistakes.

With AI, these messages are now perfectly written and highly personalised.

Scammers can create emails that look exactly like they came from your bank or a government agency.

These often contain links to fake websites designed to steal your sensitive data.

-

AI Chatbot and Romance Scams

AI chatbots are now used to maintain long, emotional conversations with victims. These bots respond naturally and build a connection over several weeks.

Once trust is firmly established, the scammer asks for money, often for a fake emergency or travel costs.

Because the interaction feels so genuine, victims rarely suspect they are talking to a machine.

Real AI Scam Cases in India

These are documented incidents from across India where AI technology was used to cause significant financial loss.

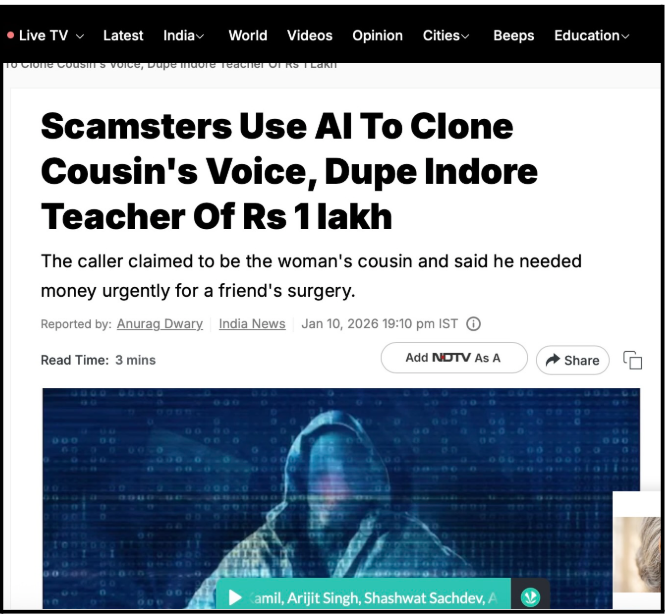

1. The Indore Teacher’s AI Voice Cloning Nightmare

This recent case from Indore is a chilling example of how AI can turn our trust against us.

Smita, a schoolteacher, received a late-night call that sounded exactly like her cousin, right down to his specific tone and way of speaking.

The caller claimed a friend had suffered a heart attack and desperately needed money for emergency surgery.

Because the voice was so perfectly cloned, Smita did not suspect a thing and quickly transferred nearly ₹1 lakh via QR codes to help.

It was only the next morning, after her cousin denied ever calling, that she realised she’d been targeted by advanced voice-modulation technology.

This incident was one of the first confirmed AI-driven frauds in Madhya Pradesh.

This shows that hearing a familiar voice is no longer enough to confirm someone’s identity.

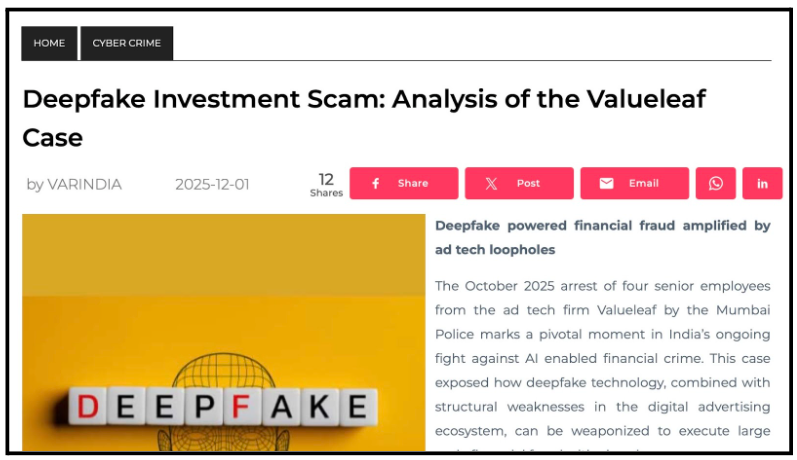

2. The Valueleaf Deepfake Investment Mega-Scam

In 2025, a major scam in Mumbai involved four executives from a trusted ad company called Valueleaf.

These insiders allegedly sold their special advertising access to a foreign criminal group for over ₹1.6 crore.

Because the company was an official Meta partner, the scammers could easily bypass the usual security filters on social media.

They flooded Facebook and Instagram with high-quality deepfake videos that looked incredibly real.

These videos used the cloned faces and voices of famous stock analysts and news anchors to trick people.

Even experienced traders were fooled into joining exclusive WhatsApp investment groups.

Once people joined these groups, the scammers showed them fake dashboards filled with made-up profits.

This convinced many victims to deposit huge amounts of money into mule bank accounts.

Even when Meta flagged the ads as suspicious, the insiders reportedly doubled the number of active accounts to keep the fraud going. This case is a massive wake-up call.

This shows that even ads placed through an official platform partner can be AI-generated fakes.

3. AI Trading Scam in Bengaluru

In this Bengaluru case, a 79-year-old woman was cheated of ₹35 lakh through a high-tech deepfake trap.

It all started when she saw a video on social media featuring Narayana Murthy, the famous co-founder of Infosys.

The deepfake reproduced his face and voice so convincingly that the investment opportunity felt safe and legitimate.

After she signed up, people posing as advisors contacted her through WhatsApp and guided her through the process.

They showed her fake profit numbers on a digital dashboard to win her trust.

Encouraged by these gains, she kept transferring more money over several weeks. When she finally tried to withdraw her funds, the scammers blocked her and vanished.

This tragedy shows that scammers are now using AI to steal the credibility of famous leaders.

How to Avoid AI Scams?

Staying safe from these advanced scams requires a higher level of awareness.

Since these tricks look so real, you have to rely on strict verification habits.

-

Always Verify Through a Second Channel

If you get a call or message asking for money, do not act on it immediately.

Hang up and contact the person directly using a number you already have saved.

For example, if your boss calls from an unknown number asking for money, call them back on their official number instead. This one simple step can defeat most impersonation scams.

-

Do Not Trust Audio or Video Blindly

In the past, hearing a voice or seeing a face was enough to prove someone’s identity.

That is no longer true, because AI can now create very realistic fakes.

Treat every unexpected financial request with caution, even if the person looks genuine.

-

Take Time Before Making Decisions

Scammers always try to create a sense of urgency. They might claim there is an emergency or a once-in-a-lifetime opportunity.

Pause for a moment and think.

Taking just five minutes to breathe can help you spot a red flag.

-

Be Sceptical of Investment Offers

If an investment promises guaranteed or unusually high returns, it is almost certainly a scam.

Always verify financial platforms and advisers through official sources, such as the SEBI website, before you commit any money.

Do not trust a video endorsement alone.

-

Avoid Clicking Unknown Links

AI-generated phishing messages are very well-written.

Before you click any link, check the web address carefully for small misspellings, swapped letters, or extra words added to a familiar name.

If anything feels slightly off, do not proceed with the click.
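For readers comfortable with a little code, the habit of checking a link's address can be sketched in Python. This is a minimal illustration, not a complete phishing filter: it assumes you keep your own list of domains you have already confirmed (for example, from your bank card or a printed statement), and `mybank.example` is a hypothetical placeholder, not a real bank. The check compares only the actual hostname, so lookalike addresses and addresses that merely contain a trusted name are rejected.

```python
from urllib.parse import urlsplit

# Hypothetical allow-list: domains you have verified yourself.
# "mybank.example" is a placeholder, not a real bank's domain.
TRUSTED_DOMAINS = {"mybank.example"}


def is_trusted(url: str) -> bool:
    """Return True only if the link's hostname is a trusted domain
    or a subdomain of one. Expects a full URL such as https://..."""
    # urlsplit extracts the real host, ignoring any "user@" prefix
    # that scammers use to make a link look familiar.
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    # Exact match, or a genuine subdomain like "netbanking.mybank.example".
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


if __name__ == "__main__":
    links = [
        "https://netbanking.mybank.example/login",           # genuine subdomain
        "https://mybank.example.secure-verify.example/kyc",  # trusted name used as a prefix
        "https://rnybank.example/login",                     # "rn" imitating "m"
        "https://mybank.example@evil.example/",              # real host is evil.example
    ]
    for link in links:
        print(link, "->", "OK" if is_trusted(link) else "DO NOT CLICK")
```

Matching against a short list of known-good domains, rather than trying to spot every possible misspelling, is the simpler design: anything that is not an exact match is treated as suspicious.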

-

Limit Your Digital Footprint

The more audio and video you share publicly, the easier it is for scammers to clone you.

Avoid sharing unnecessary personal details or voice recordings on public social media profiles.

-

Use Strong Security Practices

Always enable two-factor authentication (2FA) on your accounts. Use unique, strong passwords for every site.

Keeping your apps and devices updated also helps reduce the risk of being hacked.

How To Report AI Scams?

If you do become a victim of an AI scam, acting quickly is your best chance at recovering your funds.

1. Organise Your Evidence

Before you report anything, collect your proof. Save any videos, voice recordings, and call logs linked to the scam.

If it is an investment scam, take clear screenshots of all WhatsApp chats, the website, and your app dashboard showing your balance.

Most importantly, save all bank transfer receipts and transaction IDs (UTR numbers).

Put these in a separate folder so you are ready to upload them.

2. File a Cybercrime Complaint Online

Visit the National Cyber Crime Reporting Portal (cybercrime.gov.in) and file an official complaint. Provide every detail you have, including transaction records, screenshots, and chat history.

Explain exactly how the scam happened from start to finish. Good evidence makes it much easier for the authorities to act.

3. Call the Cybercrime Helpline

If the fraud has just happened, dial the national cybercrime helpline, 1930, immediately. This helpline is meant for urgent cases.

If you report it fast enough, the police can sometimes freeze the transaction before the money leaves the banking system.

4. Inform Your Bank or Payment App

Contact your bank or payment service as soon as you can. Ask them to flag the transaction as fraudulent.

In some rare cases, early reporting might help them stop the transfer. Share all details like the time, amount, and recipient info.

5. File a Police Complaint

Visit your local police station and file an FIR in person, especially for large amounts. Bring printed copies of your evidence, such as payment proofs and call logs.

This creates a formal legal record of the crime.

6. Report on the Platform Used

If the scam happened on WhatsApp, Instagram, or YouTube, report the account.

This helps the platforms take down fraudulent content so others do not get scammed.

Save your evidence before reporting, since the platform may delete the content.

Need Help?

If you have lost money in an AI scam, we know how stressful it can feel.

We are here to help you organise your case properly and gather the right evidence.

You can check our cybercrime fraud response plan for more clarity on reporting the crime to the authorities.

Rather than handling this alone, you will have a clear plan for what to do next.

This improves your chances of seeing action taken and helps prevent further loss.

Conclusion

AI scams are growing quickly because they are so difficult to detect. They look real, they sound real, and they feel real to our brains.

But the motive behind them has not changed. Scammers just want your trust so they can take your money.

The best way to stay safe in this new era is to slow down, verify everything, and stay alert.

In today’s digital world, trusting blindly is a huge risk.

A little bit of caution can go a long way in keeping your money safe.